Censored regression keeps every individual in the sample but observes some outcomes only at a limit value, while truncated regression works with a sample that excludes individuals outside a specified range entirely, which leads to sample selection bias if ignored. The Tobit model addresses censored data, whereas truncated data call for a separate truncated regression model; the distinction shows up clearly in income studies.
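
As a rough illustration of the difference, the sketch below simulates a hypothetical latent outcome and builds a censored and a truncated sample from it. The limit of 0, the distribution parameters, and all variable names are assumptions chosen for the example, not values from the post.

```python
import random
import statistics

random.seed(0)

# Hypothetical latent outcome that can fall below the limit.
latent = [random.gauss(1.0, 2.0) for _ in range(10_000)]
LIMIT = 0.0

# Censoring: every individual stays in the sample, but outcomes below the
# limit are recorded at the limit itself.
censored = [max(y, LIMIT) for y in latent]

# Truncation: individuals at or below the limit never enter the sample.
truncated = [y for y in latent if y > LIMIT]

latent_mean = statistics.fmean(latent)
censored_mean = statistics.fmean(censored)
truncated_mean = statistics.fmean(truncated)

print(len(latent), len(censored), len(truncated))
print(latent_mean, censored_mean, truncated_mean)
```

The censored sample keeps its full size while the truncated one shrinks, and a naive mean overstates the latent mean in both cases, which is why each situation needs its own model.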

Data Science

Step-by-Step Guide to Principal Component Analysis (PCA)

Principal Component Analysis (PCA) is a statistical method for dimensionality reduction that retains as much of the data's variance as possible by transforming correlated variables into uncorrelated principal components. It involves standardizing the data, computing the covariance matrix, and calculating its eigenvalues and eigenvectors. PCA aids noise reduction, visualization, and downstream analyses, though it has limitations such as its linearity assumption and some information loss.
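
The steps named above can be sketched with NumPy as follows. This is a minimal illustration on made-up toy data (the array `X` and the choice of two components are assumptions), not the post's own implementation.

```python
import numpy as np

rng = np.random.default_rng(0)

# Toy data: 200 samples, 3 features; the second is strongly tied to the first.
x1 = rng.normal(size=200)
X = np.column_stack([
    x1,
    2.0 * x1 + rng.normal(scale=0.1, size=200),
    rng.normal(size=200),
])

# Step 1: standardize each feature to zero mean and unit variance.
Z = (X - X.mean(axis=0)) / X.std(axis=0, ddof=1)

# Step 2: covariance matrix of the standardized data.
cov = np.cov(Z, rowvar=False)

# Step 3: eigen-decomposition. eigh is meant for symmetric matrices and
# returns eigenvalues in ascending order, so reverse them.
eigenvalues, eigenvectors = np.linalg.eigh(cov)
order = np.argsort(eigenvalues)[::-1]
eigenvalues, eigenvectors = eigenvalues[order], eigenvectors[:, order]

# Step 4: project onto the first two principal components.
scores = Z @ eigenvectors[:, :2]
explained_ratio = eigenvalues / eigenvalues.sum()

print(explained_ratio)
```

The resulting score columns are uncorrelated with each other, and `explained_ratio` shows how much variance each component carries, which is the usual basis for deciding how many components to keep.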

Understanding Gini Coefficient, AUC, and CAP

The Gini Coefficient, Cumulative Accuracy Profile (CAP), and AUC (Area Under the ROC Curve) are metrics used to evaluate classification models. CAP assesses model effectiveness via cumulative outcomes, AUC measures ranking ability, and the Gini coefficient summarizes how well the model separates the two classes. Gini is a linear rescaling of AUC (Gini = 2 × AUC - 1), so higher values of either indicate better model performance.
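
To make the AUC and Gini relationship concrete, here is a small sketch that computes AUC as the share of positive-negative pairs the model ranks correctly and then rescales it to Gini. The labels and scores are invented for illustration, and `auc_from_scores` is a hypothetical helper name.

```python
def auc_from_scores(labels, scores):
    """AUC as the probability that a random positive outranks a random negative."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    # A correctly ordered pair counts 1, a tie counts 0.5.
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

# Made-up example: 4 positives, 4 negatives.
labels = [1, 1, 1, 0, 1, 0, 0, 0]
scores = [0.9, 0.8, 0.7, 0.6, 0.55, 0.4, 0.3, 0.2]

auc = auc_from_scores(labels, scores)
gini = 2 * auc - 1

print(auc, gini)  # 0.9375 0.875
```

The pairwise loop is quadratic, so it suits small examples only; for real datasets a rank-based or library implementation would be the more practical choice.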

Evaluating Predictive Model Performance: Key Metrics

Evaluating predictive model performance involves methods tailored to regression, classification, and clustering problems. Key metrics include MAE, RMSE, and R-squared for regression, and accuracy, precision, recall, and F1-score for classification. Business-based evaluations like cost-benefit analysis and A/B testing further inform decision-making about model deployment and effectiveness.
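
The regression and classification metrics listed above follow directly from their definitions. The sketch below uses invented toy values and hypothetical function names, and it assumes at least one predicted and one actual positive so that precision and recall are defined.

```python
import math

def regression_metrics(y_true, y_pred):
    n = len(y_true)
    errors = [t - p for t, p in zip(y_true, y_pred)]
    ss_res = sum(e * e for e in errors)
    mean_true = sum(y_true) / n
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)
    return {
        "mae": sum(abs(e) for e in errors) / n,   # mean absolute error
        "rmse": math.sqrt(ss_res / n),            # root mean squared error
        "r2": 1 - ss_res / ss_tot,                # share of variance explained
    }

def classification_metrics(y_true, y_pred):
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return {
        "accuracy": sum(1 for t, p in pairs if t == p) / len(pairs),
        "precision": precision,
        "recall": recall,
        "f1": 2 * precision * recall / (precision + recall),
    }

reg = regression_metrics([3.0, 5.0, 2.0, 7.0], [2.5, 5.0, 3.0, 8.0])
clf = classification_metrics([1, 0, 1, 1, 0, 1], [1, 0, 0, 1, 1, 1])

print(reg)
print(clf)
```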

Standard Error Explained: Types & Formulas

The Standard Error (SE) measures how much a sample statistic varies from sample to sample around the true population parameter, which helps assess the precision of estimates such as the mean, regression coefficients, or proportions. Several types exist, including the Standard Error of the Mean (SEM) and robust standard errors, which address estimate reliability and data irregularities in statistical analysis.
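
Two of the most common formulas, the standard error of the mean (sample standard deviation divided by the square root of n) and the standard error of a proportion, can be sketched as below. The sample values and function names are assumptions made for the example.

```python
import math
import statistics

def standard_error_of_mean(sample):
    # Sample standard deviation (n - 1 denominator) over sqrt(n).
    return statistics.stdev(sample) / math.sqrt(len(sample))

def standard_error_of_proportion(p_hat, n):
    # Based on the binomial variance p(1 - p) / n.
    return math.sqrt(p_hat * (1 - p_hat) / n)

sem = standard_error_of_mean([4, 8, 6, 5, 7])
se_prop = standard_error_of_proportion(0.4, 100)

print(sem, se_prop)
```

Both quantities shrink with the square root of the sample size, so quadrupling n only halves the standard error.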

Key Concepts in Theory of Probability Explained Simply

This post outlines key concepts in probability and random experiments, including randomness, mutually exclusive and exhaustive events, classical probability, conditional probability, and foundational theorems such as Bayes’ Theorem and the Law of Large Numbers. Each concept is illustrated with examples that show its role in probability theory.
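
As one worked example of conditional probability, Bayes' Theorem can be applied to a screening test. The prior, sensitivity, and false positive rate below are invented numbers, and `posterior` is a hypothetical helper.

```python
def posterior(prior, likelihood, false_positive_rate):
    """P(condition | positive test) via Bayes' Theorem."""
    # Total probability of a positive test across both groups.
    evidence = likelihood * prior + false_positive_rate * (1 - prior)
    return likelihood * prior / evidence

# Assumed values: 1% prevalence, 95% sensitivity, 5% false positive rate.
p = posterior(prior=0.01, likelihood=0.95, false_positive_rate=0.05)

print(round(p, 4))  # 0.161
```

Even with an accurate test, the low prior keeps the posterior near 16%, which is the kind of result that makes the theorem worth working through by hand.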

Understanding Causal Inference: Key Concepts Explained

Causal inference determines whether a cause-and-effect relationship exists between variables, as opposed to mere correlation. Key concepts include association vs. causation, confounding variables, and counterfactuals. Techniques like randomized experiments and matching help estimate causal effects. Despite challenges such as unmeasured confounders and selection bias, causal inference is essential for informed decision-making in various fields.
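
The effect of a confounder can be seen in a small simulation. In this sketch a binary confounder `z` raises both the chance of treatment and the outcome; the true effect of 2.0, the probabilities, and the stratify-and-reweight adjustment (a simple form of exact matching on `z`) are all assumptions chosen for illustration.

```python
import random
import statistics

random.seed(1)
TRUE_EFFECT = 2.0

rows = []
for _ in range(20_000):
    z = 1 if random.random() < 0.5 else 0                    # confounder
    t = 1 if random.random() < (0.8 if z else 0.2) else 0    # treatment depends on z
    y = TRUE_EFFECT * t + 3.0 * z + random.gauss(0.0, 1.0)   # outcome depends on t and z
    rows.append((z, t, y))

def mean_outcome(t_value, z_value=None):
    return statistics.fmean(
        y for z, t, y in rows
        if t == t_value and (z_value is None or z == z_value)
    )

# Naive comparison: treated vs. untreated, ignoring the confounder.
naive = mean_outcome(1) - mean_outcome(0)

# Adjusted comparison: compare within each level of z, then weight by its share.
adjusted = 0.0
for z_value in (0, 1):
    share = sum(1 for z, _, _ in rows if z == z_value) / len(rows)
    adjusted += share * (mean_outcome(1, z_value) - mean_outcome(0, z_value))

print(naive, adjusted)
```

The naive difference is inflated because treated units more often have `z = 1`, while the adjusted estimate lands close to the true effect. This only works here because the confounder is measured; an unmeasured one could not be adjusted for this way.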

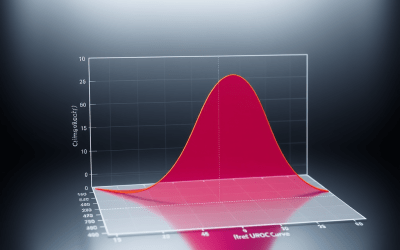

Logistic Regression Explained: Key Concepts and Tests

Logistic regression predicts binary outcomes from predictor variables by modeling the odds of the outcome through a CDF such as the logistic function. Because the model is non-linear, its coefficients are estimated by maximum likelihood (MLE). Key validation tools include the Hosmer-Lemeshow test, Weight of Evidence (WOE), and the Population Stability Index (PSI), which check good-bad separation and stability across populations.
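
Two of the building blocks mentioned above can be sketched briefly: the logistic function that maps log-odds to a probability, and the PSI, computed here with the commonly used formula sum((actual - expected) * ln(actual / expected)) over score bins. The bin shares are made-up values and the function names are hypothetical.

```python
import math

def logistic(z):
    """Map a linear predictor (log-odds) to a probability."""
    return 1.0 / (1.0 + math.exp(-z))

def log_odds(p):
    """Inverse of the logistic function."""
    return math.log(p / (1.0 - p))

def psi(expected_shares, actual_shares):
    """Population Stability Index over matching bins (all shares must be > 0)."""
    return sum(
        (a - e) * math.log(a / e)
        for e, a in zip(expected_shares, actual_shares)
    )

# Assumed bin shares for a development sample and a recent sample.
expected = [0.25, 0.25, 0.25, 0.25]
actual = [0.20, 0.30, 0.25, 0.25]
psi_value = psi(expected, actual)

print(logistic(0.0), psi_value)
```

A PSI near zero means the score distribution has barely shifted between the two samples; larger values suggest the population has drifted and the model may need review.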

Pearson vs. Spearman Correlation: A Comprehensive Guide

Correlation measures the relationship between two variables, indicating positive or negative association. Pearson’s correlation coefficient captures the strength of a linear relationship, while Spearman’s rank correlation works on ranks, which makes it suitable for ordinal (ranked qualitative) variables and for monotonic non-linear relationships. Understanding these coefficients helps analyze data patterns accurately, provided one stays cautious of spurious correlations.
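
The contrast between the two coefficients shows up on data that is monotonic but not linear. The sketch below computes Spearman's coefficient as Pearson's coefficient applied to ranks (with ties sharing their average rank); the cubic toy data and helper names are assumptions for illustration.

```python
import math

def pearson(x, y):
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)

def average_ranks(values):
    """1-based ranks; tied values share the average of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        shared = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = shared
        i = j + 1
    return ranks

def spearman(x, y):
    return pearson(average_ranks(x), average_ranks(y))

# Monotonic but non-linear relationship.
x = [1, 2, 3, 4, 5]
y = [v ** 3 for v in x]

pearson_r = pearson(x, y)
spearman_rho = spearman(x, y)

print(pearson_r, spearman_rho)
```

Spearman's coefficient reaches 1 because the ordering is perfectly preserved, while Pearson's stays below 1 because the points do not lie on a straight line.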