The Expectation-Maximization (EM) algorithm is an iterative method for estimating parameters in models with incomplete data. It handles problems such as missing values by alternating between an E-step (expectation) and an M-step (maximization), which guarantees that the likelihood never decreases from one iteration to the next. It is widely applicable in statistics and machine learning, despite some limitations.
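As a rough illustration of the two alternating steps, here is a minimal sketch of EM for a mixture of two one-dimensional Gaussians. The function names, the toy data, and the starting values are assumptions made for this example; they do not come from the post itself.

```python
import math

def normal_pdf(x, mu, sigma):
    """Density of a normal distribution with mean mu and standard deviation sigma."""
    z = (x - mu) / sigma
    return math.exp(-0.5 * z * z) / (sigma * math.sqrt(2 * math.pi))

def em_two_gaussians(data, mu, sigma, weight, iters=50):
    """Fit a two-component 1-D Gaussian mixture (hypothetical helper).

    mu, sigma: starting means and standard deviations, one per component.
    weight: starting mixing proportion of component 0.
    Returns the fitted parameters and the log-likelihood recorded at each iteration.
    """
    mu, sigma = list(mu), list(sigma)
    n = len(data)
    log_likelihoods = []
    for _ in range(iters):
        # E-step: responsibility of component 0 for each observation.
        resp = []
        loglik = 0.0
        for x in data:
            p0 = weight * normal_pdf(x, mu[0], sigma[0])
            p1 = (1 - weight) * normal_pdf(x, mu[1], sigma[1])
            loglik += math.log(p0 + p1)
            resp.append(p0 / (p0 + p1))
        log_likelihoods.append(loglik)

        # M-step: re-estimate parameters from responsibility-weighted data.
        n0 = sum(resp)
        n1 = n - n0
        mu[0] = sum(r * x for r, x in zip(resp, data)) / n0
        mu[1] = sum((1 - r) * x for r, x in zip(resp, data)) / n1
        sigma[0] = math.sqrt(sum(r * (x - mu[0]) ** 2 for r, x in zip(resp, data)) / n0)
        sigma[1] = math.sqrt(sum((1 - r) * (x - mu[1]) ** 2 for r, x in zip(resp, data)) / n1)
        weight = n0 / n
    return mu, sigma, weight, log_likelihoods

# Toy data with two visible groups (made up for illustration).
data = [1.0, 1.2, 0.8, 1.1, 5.0, 5.2, 4.8, 5.1]
mu, sigma, weight, history = em_two_gaussians(data, mu=[0.0, 6.0], sigma=[1.0, 1.0], weight=0.5)
```

The recorded `history` should never decrease, which is the monotonicity property mentioned above. This sketch has no safeguard against a component collapsing onto a single point, so a practical implementation would need a variance floor or similar.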

Data Science

Time Series Modeling with State-Space and Hidden Markov Models: A Beginner’s Guide

This post explains State-Space Models and Hidden Markov Models (HMMs), which are crucial for analyzing dynamic processes with noisy or incomplete data. The State-Space Model captures latent processes over time, while HMMs deal with discrete hidden states. Both frameworks support real-time forecasting and are essential in fields like healthcare and finance.

K-Means Clustering: Finding Patterns Without Labels

K-means clustering finds natural groupings in unlabeled data by assigning each point to its nearest centroid under a distance metric. The process involves initializing centroids, assigning data points to the nearest centroid, updating each centroid to the mean of its assigned points, and repeating until the assignments stabilize. Although it’s fast and simple, K-means may struggle with non-convex clusters and is sensitive to the initial choice of centroids.
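The initialize, assign, update, repeat loop can be sketched in a few lines of plain Python. This is a minimal version assuming squared Euclidean distance and random initialization from the data points; the function names and the toy points are illustrative only.

```python
import random

def squared_distance(p, q):
    """Squared Euclidean distance between two points given as tuples."""
    return sum((a - b) ** 2 for a, b in zip(p, q))

def nearest(point, centroids):
    """Index of the centroid closest to point."""
    return min(range(len(centroids)), key=lambda j: squared_distance(point, centroids[j]))

def kmeans(points, k, max_iters=100, seed=0):
    rng = random.Random(seed)
    # Initialization: pick k of the data points as starting centroids.
    centroids = rng.sample(points, k)
    for _ in range(max_iters):
        # Assignment step: label each point with its nearest centroid.
        labels = [nearest(p, centroids) for p in points]
        # Update step: move each centroid to the mean of its members.
        new_centroids = []
        for j in range(k):
            members = [p for p, lab in zip(points, labels) if lab == j]
            if members:
                new_centroids.append(tuple(sum(c) / len(members) for c in zip(*members)))
            else:
                new_centroids.append(centroids[j])  # keep an empty cluster's centroid
        if new_centroids == centroids:
            break  # centroids stopped moving
        centroids = new_centroids
    labels = [nearest(p, centroids) for p in points]
    return centroids, labels

points = [(0, 0), (0, 1), (1, 0), (10, 10), (10, 11), (11, 10)]
centroids, labels = kmeans(points, k=2)
```

Changing `seed` changes the starting centroids, which is where the sensitivity to initialization shows up on less cleanly separated data.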

Difference-in-Differences (DiD) for Policy Evaluation: A Practical Guide

Evaluating the causal impact of public policies is crucial in social science. The Difference-in-Differences (DiD) method is a prominent technique for this, comparing outcome changes in treatment and control groups. Key aspects include the parallel trends assumption, proper group selection, and statistical rigor, all essential for accurate policy evaluation and interpretation.
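In its simplest two-group, two-period form, the comparison of outcome changes reduces to a difference of two differences. The sketch below assumes that setup and uses made-up outcome values; `did_estimate` is a hypothetical helper, not a library function.

```python
from statistics import mean

def did_estimate(treated_pre, treated_post, control_pre, control_post):
    """Two-by-two difference-in-differences on group means."""
    treated_change = mean(treated_post) - mean(treated_pre)
    control_change = mean(control_post) - mean(control_pre)
    # Under parallel trends, the control change stands in for what the
    # treated group would have experienced without the policy.
    return treated_change - control_change

# Illustrative outcomes only.
effect = did_estimate(
    treated_pre=[10, 12], treated_post=[15, 17],
    control_pre=[8, 10], control_post=[10, 12],
)
```

Here the treated group rises by 5 and the control group by 2, so the estimate is 3. In practice the same quantity is usually obtained from a regression with a treatment-by-period interaction, which also provides standard errors.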

Step-by-Step Guide to Propensity Score Matching with Python

Propensity Score Matching (PSM) reduces treatment assignment bias in observational studies, supporting the estimation of causal effects. It matches individuals with similar characteristics based on propensity scores using methods like nearest neighbor matching. PSM’s efficacy relies on thorough covariate selection and balance checks, because it adjusts only for observed covariates and cannot remove bias from unobserved ones.

Introduction to Instrumental Variables: Solving the Endogeneity Problem

The article discusses the challenges of endogeneity in regression models, which can bias ordinary least squares estimates. It introduces instrumental variables methods, particularly two-stage least squares, to obtain consistent causal estimates. Key elements include identifying valid instruments, testing their strength, and ensuring appropriate reporting of results to support robust analyses.
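To make the two stages concrete, here is a minimal sketch of two-stage least squares with one endogenous regressor and one instrument. The data are constructed by hand so that the true slope is 2 and the instrument `z` is uncorrelated with the confounder `u`; all names and numbers are assumptions for illustration.

```python
from statistics import mean

def ols(x, y):
    """Slope and intercept from a simple regression of y on x."""
    mx, my = mean(x), mean(y)
    slope = sum((a - mx) * (b - my) for a, b in zip(x, y)) / sum((a - mx) ** 2 for a in x)
    return slope, my - slope * mx

def two_stage_least_squares(y, x, z):
    # Stage 1: regress the endogenous regressor x on the instrument z.
    b1, a1 = ols(z, x)
    x_hat = [a1 + b1 * zi for zi in z]
    # Stage 2: regress y on the fitted values from stage 1.
    slope, _ = ols(x_hat, y)
    return slope

z = [0, 1, 2, 3]                              # instrument
u = [1, -1, -1, 1]                            # unobserved confounder, uncorrelated with z
x = [zi + ui for zi, ui in zip(z, u)]         # endogenous regressor
y = [2 * xi + 3 * ui for xi, ui in zip(x, u)]  # true effect of x is 2

ols_slope, _ = ols(x, y)                      # biased upward by the confounder
iv_slope = two_stage_least_squares(y, x, z)   # recovers 2 on this toy data
```

Running the second stage by hand like this gives the right point estimate but not valid standard errors, so a dedicated IV routine is the safer choice for reported results.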

Understanding Fixed and Random Effects in Panel Data Analysis

In analyzing panel data—data that tracks multiple entities (like people, firms, or countries) across time—economists and data scientists often face a key modeling choice: Should I use a fixed effects model or a random effects model? Both models are designed to handle...

Plotting and Interpreting an ROC Curve

The Receiver Operating Characteristic (ROC) curve evaluates a binary classifier by plotting the True Positive Rate against the False Positive Rate at various thresholds. Originating in signal detection theory during WWII, it highlights the trade-off between sensitivity and specificity. The Area Under the Curve (AUC) summarizes how well the classifier separates the two classes: 0.5 corresponds to random guessing and 1.0 to perfect separation.
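The threshold sweep behind the curve can be sketched directly. The version below assumes labels coded as 0 and 1 with both classes present, and integrates the curve with the trapezoidal rule; the helper names and the four-point example are illustrative.

```python
def roc_points(y_true, scores):
    """(FPR, TPR) pairs obtained by lowering the threshold over each distinct score."""
    pos = sum(y_true)
    neg = len(y_true) - pos
    points = [(0.0, 0.0)]  # threshold above every score: nothing predicted positive
    for t in sorted(set(scores), reverse=True):
        tp = sum(1 for y, s in zip(y_true, scores) if s >= t and y == 1)
        fp = sum(1 for y, s in zip(y_true, scores) if s >= t and y == 0)
        points.append((fp / neg, tp / pos))
    return points

def auc(points):
    """Area under the piecewise-linear curve via the trapezoidal rule."""
    return sum((x1 - x0) * (y0 + y1) / 2 for (x0, y0), (x1, y1) in zip(points, points[1:]))

y_true = [0, 0, 1, 1]
scores = [0.1, 0.4, 0.35, 0.8]
curve = roc_points(y_true, scores)
print(auc(curve))  # 0.75
```

Each point on `curve` corresponds to one threshold, so moving along it trades a higher true positive rate for a higher false positive rate.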

Understanding Survival Analysis: Key Concepts Explained

Survival analysis studies the time until an event occurs, such as death or failure, particularly when some observations are censored. Key concepts include event tracking, censoring types, the survival function, and the hazard function. Methods like Kaplan-Meier and Cox regression analyze time-to-event data, with applications in fields like medicine and engineering.
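As a small illustration of how censoring enters the survival function, here is a sketch of the Kaplan-Meier product-limit estimator. It assumes right censoring only; `observed` marks whether the event was seen (1) or the subject was censored (0), and the five durations are made up.

```python
def kaplan_meier(durations, observed):
    """Return (time, survival probability) pairs at each observed event time."""
    at_risk = len(durations)
    survival = 1.0
    curve = []
    for t in sorted(set(durations)):
        events = sum(1 for d, e in zip(durations, observed) if d == t and e)
        leaving = sum(1 for d in durations if d == t)  # events plus censored at t
        if events:
            # Multiply by the conditional probability of surviving past t.
            survival *= 1 - events / at_risk
            curve.append((t, survival))
        # Censored subjects drop out of the risk set without lowering survival.
        at_risk -= leaving
    return curve

durations = [1, 2, 3, 4, 5]
observed = [1, 0, 1, 1, 0]
curve = kaplan_meier(durations, observed)
```

The subject censored at time 2 does not produce a step in the curve, but it does shrink the risk set, so the event at time 3 is measured against three subjects rather than four.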

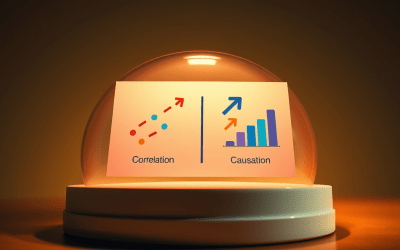

Causal Inference Techniques: ATE, ATT, and CATE

Causal inference assesses whether a treatment causes a change in outcomes rather than merely being correlated with it. It distinguishes between association and causation, explores counterfactuals, addresses confounding, and targets estimands like ATE, ATT, and CATE to quantify effects, emphasizing the importance of statistical analysis in evaluating interventions.
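The three quantities differ only in which units the individual effects are averaged over. The sketch below uses a hypothetical table in which both potential outcomes are known for every unit, which never happens with real data; it is meant to show the definitions, not an estimation method.

```python
from statistics import mean

# Hypothetical units: (treated flag, subgroup, outcome if untreated, outcome if treated).
units = [
    (1, "young", 5, 9),
    (1, "young", 6, 10),
    (1, "old", 4, 5),
    (0, "young", 5, 8),
    (0, "old", 3, 4),
    (0, "old", 4, 5),
]

# Individual treatment effect for each unit: y1 - y0.
effects = [(d, g, y1 - y0) for d, g, y0, y1 in units]

# ATE: average effect over everyone.
ate = mean(e for _, _, e in effects)

# ATT: average effect over the units that actually received treatment.
att = mean(e for d, _, e in effects if d == 1)

# CATE: average effect within each subgroup.
cate = {g: mean(e for _, gg, e in effects if gg == g) for g in ("young", "old")}
```

On this toy table the ATT (3) is larger than the ATE (about 2.33) because the treated group contains more of the subgroup with the larger effect.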